Multi-Agent Deep Research: How M-GRPO Transforms Large Language Model Collaboration

Introduction

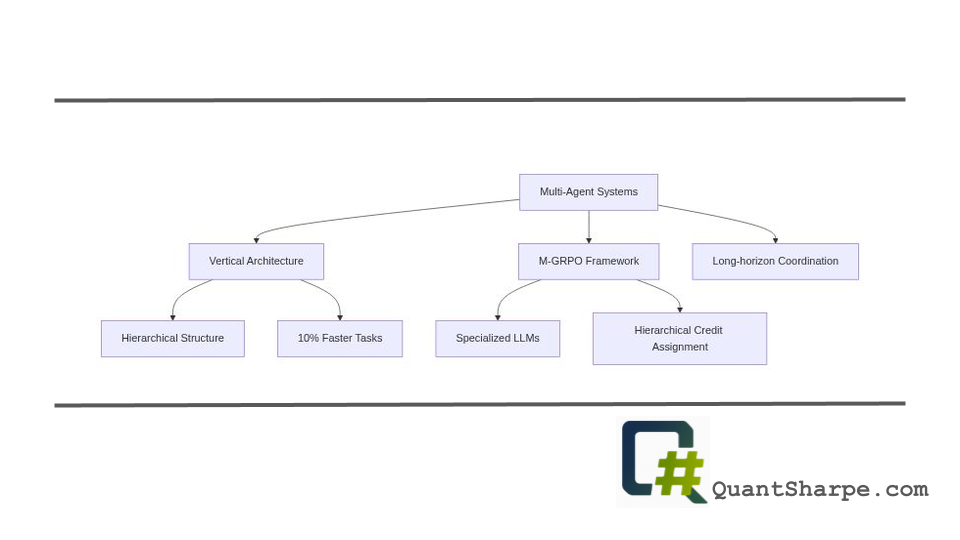

Researchers from Ant Group and Imperial College London have introduced a significant advance in multi-agent artificial intelligence: Multi-Agent Group Relative Policy Optimization (M-GRPO)[2][4]. Led by Haoyang Hong, Jiajun Yin, Yuan Wang, and a team of 14 co-authors, the work addresses a fundamental challenge in modern AI systems: how to train multiple specialized large language models (LLMs) to work together on complex, real-world tasks. Rather than relying on a single unified model to handle every aspect of a problem, M-GRPO lets distinct LLMs develop specialized expertise while maintaining coherent system-level coordination. The work matters beyond academic interest because it bears on the practical architecture of AI assistants that must handle increasingly sophisticated problems requiring multi-step reasoning and tool integration.

Historical Context: From Single Agents to Collaborative Systems

To fully appreciate the significance of M-GRPO, we must understand the evolution of both multi-agent systems and reinforcement learning methodologies that led to this breakthrough.

The Rise of Multi-Agent Architectures

For decades, artificial intelligence research predominantly focused on single-agent systems: monolithic models attempting to solve problems independently. This approach proved adequate for narrow, well-defined tasks but faltered on complex, real-world challenges requiring coordination across multiple domains or perspectives. The limitations became apparent as researchers encountered problems that demanded planning, tool use, verification, and synthesis all at once, a combination of capabilities that is difficult for any single model to master.

Multi-agent systems emerged as a conceptual response to these constraints. Rather than concentrating intelligence in a single entity, multi-agent architectures distribute problem-solving responsibility across multiple specialized agents that communicate and coordinate to achieve system-level objectives. This paradigm shift reflected a fundamental recognition: many real-world problems are inherently distributed, requiring diverse capabilities working in concert.

The Reinforcement Learning Foundation

In parallel with the development of multi-agent concepts, reinforcement learning (RL) has undergone its own transformation. Policy gradient methods such as PPO (Proximal Policy Optimization) became a standard tool by keeping policy updates stable through a clipped surrogate objective. However, these methods were designed for single-agent scenarios. Applied naively to multi-agent settings, they exhibit critical weaknesses: they struggle with credit assignment, become unstable in non-stationary environments where other agents are learning at the same time, and often fail to exploit the structure of cooperative or hierarchical agent relationships.

Group Relative Policy Optimization (GRPO) represented progress on the optimization side[1]. Instead of training a separate value network (critic) as PPO does, GRPO samples a group of responses for the same prompt and normalizes each response's reward against the group's mean and standard deviation, which stabilizes training and removes the cost of the critic. However, GRPO was formulated for a single policy and does not by itself handle vertical multi-agent architectures: hierarchical systems where specialized agents operate at different frequencies with variable computational requirements.
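For reference, in the standard outcome-reward form of GRPO, a prompt $q$ is answered $G$ times, producing rewards $r_1, \dots, r_G$, and every token of response $i$ receives the same group-relative advantage:

$$
\hat{A}_i = \frac{r_i - \operatorname{mean}(r_1, \dots, r_G)}{\operatorname{std}(r_1, \dots, r_G)}, \qquad i = 1, \dots, G.
$$

Because the baseline is the group mean, no critic is needed, and rescaling all rewards by a positive constant leaves $\hat{A}_i$ unchanged. Implementations typically add a small constant to the denominator to avoid division by zero when every reward in a group is equal.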

The Problem M-GRPO Solves: Specialized LLMs in Vertical Hierarchies

Current approaches to multi-agent LLM systems face a critical architectural dilemma. Many existing systems deploy a single unified LLM across all agents, which creates a fundamental constraint: all agents share identical parameters and capabilities, preventing the emergence of specialized expertise. Conversely, attempts to use distinct LLMs for different roles encounter severe optimization challenges that previous methods could not adequately address.

M-GRPO targets these specific challenges directly[2]. Consider a practical scenario: a research assistant system where one agent (the main agent) decomposes user queries and coordinates subtasks, while specialized sub-agents handle different roles - perhaps one excels at web search and information retrieval, another at logical reasoning, and a third at code execution. These agents operate at fundamentally different frequencies. The main agent might generate multiple planning steps while a sub-agent executes a single tool call. Some queries might invoke certain sub-agents multiple times while never calling others.

Traditional RL methods break down in this scenario for several reasons:

Trajectory Heterogeneity: Each rollout produces trajectories with very different structures and lengths. Standard PPO estimates per-step advantages with a learned value function, but a single critic has little to anchor on when one trajectory is a short planning step and another is a long multi-step tool execution, so the resulting advantage estimates become unreliable.

Credit Assignment Complexity: Determining which agent deserves credit for good outcomes becomes genuinely ambiguous. Did success result from the main agent’s superior planning or the sub-agent’s effective execution? Did poor performance stem from bad delegation or poor execution?

Gradient Flow Disruption: When agents are deployed on separate servers, a practical requirement for scaling, backpropagating a single loss through the whole system would require passing activations and gradients between servers, which is computationally expensive and introduces communication bottlenecks.

Non-Stationary Learning Environment: As each agent improves during training, the learning landscape shifts for all others. An agent optimized against yesterday’s sub-agent performance may now be suboptimal.

Deep Technical Analysis: How M-GRPO Works

M-GRPO’s elegance lies in how it addresses these challenges through a carefully designed technical approach[3].

Hierarchical Credit Assignment Through Group-Relative Advantages

The core innovation centers on computing group-relative advantages specifically designed for hierarchical structures. Rather than computing advantages against a fixed baseline or centralizing advantage computation, M-GRPO normalizes advantages within agent groups while respecting the hierarchical relationships between main and sub-agents.

Formally, for $K$ rollouts on a query $q$, rollout $k$ yields a main-agent trajectory $\tau_M^{(k)}$ and a set of sub-agent trajectories $\{\tau_{S,i}^{(k)}\}_{i=1}^{d_k}$, where the number of sub-agent invocations $d_k$ can differ from one rollout to the next. By computing group-relative advantages separately for $M$ and $S$ while keeping track of which sub-agent trajectories belong to which main-agent rollout, the method gives each agent a meaningful credit signal even when trajectories have very different structures[2][3].

This approach yields an immediate benefit: stability. Because advantages are normalized within groups rather than computed absolutely, training becomes far less sensitive to reward scale variations - a major source of instability in multi-agent settings.
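The sketch below illustrates the idea in Python under stated assumptions: each of the $K$ rollouts carries one scalar reward for the main agent and one per sub-agent invocation, and sub-agent rewards are normalized as a single pooled group across all rollouts of the same query. How M-GRPO actually derives sub-agent rewards and forms the sub-agent group may differ; `Rollout`, `group_normalize`, and `hierarchical_advantages` are hypothetical names.

```python
from dataclasses import dataclass, field
from statistics import fmean, pstdev

EPS = 1e-6  # avoids division by zero when every reward in a group is equal


@dataclass
class Rollout:
    """One of the K rollouts for a query q (hypothetical structure)."""
    main_reward: float                                      # reward credited to the main agent M
    sub_rewards: list[float] = field(default_factory=list)  # one reward per sub-agent call (d_k entries)


def group_normalize(rewards: list[float]) -> list[float]:
    """GRPO-style group-relative advantage: (r - mean) / (std + EPS)."""
    if not rewards:
        return []
    mu, sigma = fmean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + EPS) for r in rewards]


def hierarchical_advantages(
    rollouts: list[Rollout],
) -> tuple[list[float], list[list[float]]]:
    """Normalize M and S in separate groups, keeping each sub-agent
    advantage attached to the rollout (main trajectory) it came from.

    Assumption: the S group pools every sub-agent invocation across all K rollouts.
    """
    main_adv = group_normalize([r.main_reward for r in rollouts])
    pooled = group_normalize([s for r in rollouts for s in r.sub_rewards])

    # Split the pooled S advantages back into per-rollout lists of length d_k.
    sub_adv, start = [], 0
    for r in rollouts:
        end = start + len(r.sub_rewards)
        sub_adv.append(pooled[start:end])
        start = end
    return main_adv, sub_adv
```

For example, `hierarchical_advantages([Rollout(1.0, [0.8, 0.6]), Rollout(0.0, [0.2]), Rollout(1.0, [0.9, 0.7, 0.5])])` returns three main-agent advantages that sum to zero and sub-agent advantage lists of lengths 2, 1, and 3, even though the rollouts invoked the sub-agent a different number of times. Multiplying every reward by the same positive constant leaves the advantages essentially unchanged (up to the `EPS` term), which is the scale insensitivity described above.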

Trajectory Alignment and Variable Invocations

M-GRPO handles variable sub-agent invocation counts per rollout through trajectory alignment. Rather than requiring every rollout to have the same structure, the system builds fixed-size batches by masking, duplicating, or selectively dropping sub-trajectories[2]. This preserves information about which agents were actually invoked while still allowing efficient batched computation.
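A minimal sketch of one possible alignment policy, assuming a fixed target number of sub-agent slots per rollout. The function `align_sub_trajectories` and its `target` parameter are hypothetical, and the paper's exact rules for what to drop or duplicate may differ: rollouts with too many sub-trajectories are randomly subsampled, rollouts with too few are padded by duplicating existing ones, and rollouts with none are padded with masked placeholders that contribute no gradient.

```python
import random
from typing import Optional, TypeVar

T = TypeVar("T")


def align_sub_trajectories(
    subs: list[T], target: int, rng: random.Random
) -> tuple[list[Optional[T]], list[int]]:
    """Return exactly `target` sub-trajectory slots and a 0/1 loss mask
    (hypothetical alignment policy)."""
    d = len(subs)
    if d == target:
        return list(subs), [1] * target
    if d > target:
        # Selective dropping: keep a random subset, preserving original order.
        keep = sorted(rng.sample(range(d), target))
        return [subs[i] for i in keep], [1] * target
    if d == 0:
        # Masking: placeholders keep the batch shape but are excluded from the loss.
        return [None] * target, [0] * target
    # Duplication: pad with randomly chosen copies of real sub-trajectories.
    extra = [rng.choice(subs) for _ in range(target - d)]
    return list(subs) + extra, [1] * target
```

With `target=2`, a rollout with four sub-agent calls keeps two of them, a rollout with one call is padded to two copies of it, and a rollout with no calls yields `[None, None]` with mask `[0, 0]`. One consequence of padding by duplication is that duplicated sub-trajectories count more than once in the loss.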

Decoupled Training Pipeline for Scalability

Perhaps most important in practice is the decoupled training pipeline, which enables training without cross-server backpropagation[2][4]. In this architecture, agents running on separate servers exchange only minimal statistical summaries via a shared parameter store. This is a fundamental shift from centralized training: each agent optimizes locally while receiving guidance from group-relative statistics. The design enables scalable training of large multi-agent systems without the communication bottlenecks that plague centralized approaches.
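To make the data flow concrete, here is a single-process Python sketch. It assumes the shared store holds only one scalar outcome reward per rollout and that sub-agent advantages are derived from those outcome rewards; `SharedStore`, `main_agent_side`, and `sub_agent_side` are hypothetical stand-ins for separate servers, and the real pipeline's storage backend and exchanged statistics may differ. The point it illustrates is that only scalars cross the boundary: the sub-agent side computes its advantages and would run its own optimizer step locally, with no gradients flowing to or from the main agent's model.

```python
import threading
from statistics import fmean, pstdev


class SharedStore:
    """Stand-in for a store reachable from both servers (e.g., a database).

    Only scalar rewards are exchanged: no activations, no gradients.
    """

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._rewards: dict[tuple[str, int], float] = {}  # (query_id, rollout k) -> reward

    def put_reward(self, query_id: str, k: int, reward: float) -> None:
        with self._lock:
            self._rewards[(query_id, k)] = reward

    def reward(self, query_id: str, k: int) -> float:
        with self._lock:
            return self._rewards[(query_id, k)]

    def group_stats(self, query_id: str) -> tuple[float, float]:
        """Mean and population std of all rollout rewards for one query."""
        with self._lock:
            rs = [r for (q, _), r in self._rewards.items() if q == query_id]
        return fmean(rs), pstdev(rs)


def main_agent_side(store: SharedStore, query_id: str, rewards: list[float]) -> None:
    """Main-agent server: publish the outcome reward of each of its K rollouts."""
    for k, r in enumerate(rewards):
        store.put_reward(query_id, k, r)


def sub_agent_side(store: SharedStore, query_id: str, rollout_ids: list[int],
                   eps: float = 1e-6) -> list[float]:
    """Sub-agent server: read group statistics and compute group-relative
    advantages for the rollouts it took part in (assumption: it reuses the
    rollout's outcome reward). A local optimizer step on the sub-agent's own
    model would follow here (omitted)."""
    mu, sigma = store.group_stats(query_id)
    return [(store.reward(query_id, k) - mu) / (sigma + eps) for k in rollout_ids]
```

Because the sub-agent side needs only the group statistics and per-rollout rewards, the two sides can run on different hardware and at different paces; in a real deployment, the in-memory dictionary would be replaced by actual shared storage.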

Empirical Validation and Performance Improvements

The practical impact of M-GRPO becomes clear when examining empirical results. Across real-world benchmarks including GAIA, XBench, and WebWalkerQA, M-GRPO demonstrates consistent improvements over baseline approaches[2][4][5].

Co-training Advantages: Experiments show a clear performance ordering: co-training (training both main and sub-agents together) outperforms main-only training (training only the main agent with frozen sub-agents), which in turn outperforms single-agent GRPO[3]. This supports two conclusions: first, multi-agent architectures provide inherent benefits for complex tasks, and second, joint optimization through M-GRPO further magnifies these benefits.

Step Efficiency: M-GRPO achieves approximately 30% fewer reasoning steps while maintaining or improving accuracy compared to naive GRPO or PPO-based baselines[1]. This is an inference-time gain: fewer steps mean faster inference, reduced computational costs, and lower latency in production systems.

Specialized Capability Emergence: Qualitative analysis reveals that agents developed distinct specialization patterns. Rather than converging to homogeneous strategies, the main agent improved at task decomposition and sub-task assignment, while sub-agents specialized in specific reasoning domains or tool usage patterns[6]. This emergent specialization directly validates the system’s design philosophy.

Strengths and Technical Contributions

Addressing Real System Challenges: Unlike much academic RL work that optimizes for idealized settings, M-GRPO directly addresses practical constraints encountered in deployed systems - varying agent frequencies, heterogeneous computational requirements, distributed deployment across servers.

Generalization Across Problem Domains: The research demonstrates effectiveness across diverse benchmarks (logical reasoning, web search, tool use, document understanding), suggesting the framework’s applicability extends broadly rather than working only on narrow domains.

Two-Stage Training Curriculum: The empirical approach of using a two-stage curriculum (first stage on simple data for format learning, second stage on challenging data for collaborative capability development) shows careful experimental design informed by practical deployment experience[3].

Ablation Studies Validating Design Choices: The paper includes ablation studies confirming that both hierarchical credit assignment and trajectory alignment are essential[1]. This validation strengthens confidence in the technical approach.

Limitations and Critical Examination

Despite these strengths, several limitations deserve careful consideration.

Limited Theoretical Analysis: While the empirical results are compelling, the paper lacks deep theoretical analysis of convergence properties, sample complexity bounds, or formal guarantees about when and why the method should succeed. Many RL theoretical frameworks assume relatively simple, stationary environments - M-GRPO’s hierarchical, non-stationary setting would benefit from formal theoretical grounding.

Benchmark Selection and Scope: The evaluation focuses on research-oriented tasks (information retrieval, reasoning, tool use). Performance on other multi-agent scenarios - collaborative games, sequential decision-making in dynamic environments, or adversarial settings - remains unclear. The findings might not generalize to all multi-agent problems.

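Comparison Baselines: While comparisons against single-agent GRPO and frozen sub-agent approaches are valuable, comparisons with established multi-agent RL methods (QMIX, MADDPG, or more recent multi-agent policy optimization techniques), adapted to the LLM setting, would strengthen the evaluation. Such adaptations are not trivial, since these methods were designed for conventional multi-agent RL environments rather than token-level LLM policies, but they would help isolate what is specific to M-GRPO.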

Scalability Limits: Although the decoupled training pipeline enables distributed training, questions remain about scalability limits. How does performance degrade with dozens or hundreds of agents? The paper evaluates two-agent hierarchies; real-world systems might involve more complex agent topologies.

Hyperparameter Sensitivity: The paper doesn’t thoroughly explore sensitivity to hyperparameters or provide guidance for practitioners on choosing group sizes and normalization settings, trajectory alignment strategies, or curriculum parameters when adapting the method to new domains.

Limited Discussion of Failure Modes: The paper lacks candid discussion of scenarios where M-GRPO might underperform or fail. Understanding failure modes would help practitioners avoid deploying the method inappropriately.

Implications for AI System Design

The success of M-GRPO carries important implications extending beyond the narrow technical contribution.

From Monolithic to Modular Intelligence: This work validates a vision of AI systems built from specialized, modular components rather than monolithic models. Just as human organizations achieve complex goals through specialized teams, AI systems may benefit from architectural specialization. This modular approach offers several advantages: different agents can be deployed on hardware matched to their workloads, specialized agents can be updated independently without retraining the entire system, and failure modes become more localized and manageable.

Tool-Augmented Reasoning at Scale: M-GRPO enables sophisticated tool use at scale. Rather than training a single model to handle planning, tool selection, tool use, and synthesis, the system distributes these responsibilities across agents. This appears to be a particularly promising direction as AI systems increasingly need to interact with external tools, APIs, and information sources.

The Value of Heterogeneity: The research suggests that heterogeneous multi-agent systems, composed of distinct agents with different capabilities, can outperform homogeneous ones. This argues for investing in diversity rather than only seeking unified, universal models.

Practical Deployment Considerations: The emphasis on decoupled training and distributed deployment suggests a maturation of reinforcement learning from academic benchmark focus toward practical production concerns. This shift toward real-world constraints is healthy for the field.

Future Research Directions

M-GRPO opens several promising research avenues.

Extending Beyond Two-Level Hierarchies: Current work focuses on main agent plus sub-agents. Natural extensions include deeper hierarchies with multiple levels of delegation, or more complex network topologies where agents interact in non-hierarchical patterns.

Theoretical Foundations: Developing convergence proofs, sample complexity bounds, and formal conditions under which M-GRPO guarantees stable learning would significantly strengthen the contribution and guide practitioners in setting hyperparameters.

Heterogeneous Agent Capabilities: While M-GRPO uses distinct LLMs, truly maximizing specialization might involve agents with fundamentally different architectures - combining LLMs with symbolic reasoners, specialized neural networks, or classical algorithms optimized for specific reasoning modes.

Online Adaptation and Non-Stationary Environments: Extending M-GRPO to handle more dynamic environments where agent roles or capabilities shift during deployment would increase practical applicability.

Scaling to Large-Scale Multi-Agent Systems: Open questions include how the approach scales to systems with many more agents, how efficient inter-agent communication remains at that scale, and which hierarchical coordination strategies work for very large agent teams.

Integration with Other Learning Paradigms: Combining M-GRPO with imitation learning, exploration strategies, or meta-learning approaches might further improve sample efficiency and performance.

Broader Context in Multi-Agent AI and LLMs

M-GRPO arrives at a pivotal moment in AI development where several trends converge.

LLMs as Reasoning Substrates: Recent advances demonstrate that LLMs excel at diverse reasoning tasks when properly prompted and given access to appropriate tools. M-GRPO provides a principled framework for orchestrating multiple LLMs, each contributing specialized reasoning capabilities.

From Single Models to System Architecture: The field increasingly recognizes that progress may come not from scaling individual models, but from architecting better systems of smaller, specialized models. M-GRPO exemplifies this architectural turn.

Tool Use and Grounding: As AI systems increasingly interact with external tools, APIs, and information sources, methods for coordinating tool use across agents become essential infrastructure. M-GRPO directly addresses this need.

Production Deployment: While much RL research remains focused on benchmark performance, M-GRPO’s attention to practical concerns - distributed training, scalability, minimal cross-server communication - reflects the field’s maturation toward production deployment.

Conclusion: A Step Toward Collaborative AI Intelligence

Multi-Agent Group Relative Policy Optimization represents solid technical progress addressing genuine challenges in training cooperative multi-agent systems. The combination of hierarchical credit assignment, trajectory alignment, and decoupled training creates a practical solution to problems that limited previous approaches.

More broadly, the work validates an architectural vision: sophisticated AI systems may be built from specialized, modular agents trained to coordinate effectively. This modular approach offers flexibility, specialization, and robustness properties that are difficult to achieve with monolithic systems.

That said, significant questions remain. The theoretical foundations require strengthening, evaluation on diverse multi-agent scenarios beyond tool-augmented reasoning would build confidence in generalization, and investigation of failure modes would guide practitioners. Scaling beyond two-level hierarchies and integrating with other learning paradigms represent promising future directions.

For researchers working on multi-agent systems, tool-augmented reasoning, or production AI deployment, M-GRPO provides both a practical toolkit and conceptual framework worth studying. For the broader AI community, this work exemplifies the shift toward system-level thinking - recognizing that future advances may come as much from better coordination architectures as from individual model improvements.
